In contrast, resources managers such as Apache YARN dynamically allocates containers as master or worker nodes according to the user workload. In this mode, we will be using its resource manager to setup containers to run either as a master or a worker node. Spark base imageįor the Spark base image, we will get and setup Apache Spark in standalone mode, its simplest deploy configuration. Then, we get the latest Python release (currently 3.7) from Debian official package repository and we create the shared volume. By choosing the same base image, we solve both the OS choice and the Java installation.

Apache Spark official GitHub repository has a Dockerfile for Kubernetes deployment that uses a small Debian image with a built-in Java 8 runtime environment (JRE).

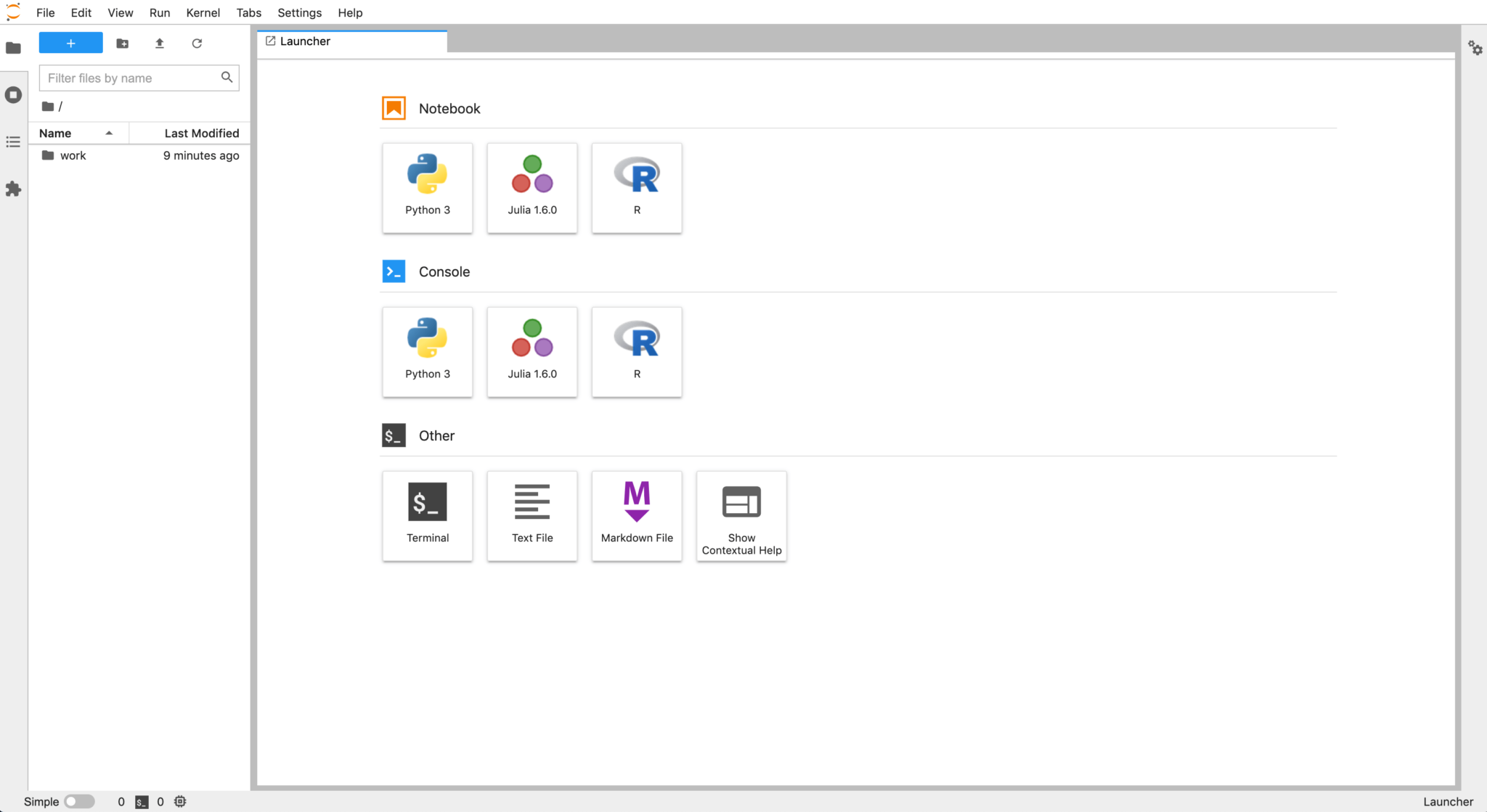

It will also include the Apache Spark Python API (PySpark) and a simulated Hadoop distributed file system (HDFS).įirst, let’s choose the Linux OS. By the end, you will have a fully functional Apache Spark cluster built with Docker and shipped with a Spark master node, two Spark worker nodes and a JupyterLab interface.

#JUPYTERLAB DOCKER IMAGE HOW TO#

In the next sections, I will show you how to build your own cluster. I believe a comprehensive environment to learn and practice Apache Spark code must keep its distributed nature while providing an awesome user experience. Some GitHub projects offer a distributed cluster experience however lack the JupyterLab interface, undermining the usability provided by the IDE. Jupyter offers an excellent dockerized Apache Spark with a JupyterLab interface but misses the framework distributed core by running it on a single container. To get started, you can run Apache Spark on your machine by using one of the many great Docker distributions available out there. With more than 25k stars on GitHub, the framework is an excellent starting point to learn parallel computing in distributed systems using Python, Scala and R. Apache Spark is arguably the most popular big data processing engine.
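The cluster described in this article (one Spark master, two Spark workers, a JupyterLab container and a shared volume standing in for HDFS) can be sketched as a Compose-style service definition. The Python sketch below builds that definition as a plain dictionary. It is an illustration under stated assumptions, not the article's actual setup: the image names, the `/opt/spark` and `/opt/workspace` paths, the host port mapping and the helper names `spark_class` and `build_cluster` are all hypothetical. The Spark main classes and the default ports 7077 (master), 8080 (master web UI), 8081 (worker web UI) and 8888 (JupyterLab) are the standard defaults.

```python
import json

# Hypothetical values: image names, paths and host ports are assumptions
# for illustration, not taken from the article.
SPARK_HOME = "/opt/spark"
SHARED_WORKSPACE = "/opt/workspace"  # shared volume standing in for HDFS
MASTER_HOST = "spark-master"
MASTER_PORT = 7077  # Spark standalone master default port
MASTER_URL = f"spark://{MASTER_HOST}:{MASTER_PORT}"


def spark_class(main_class, *args):
    """Build the command that launches a standalone daemon via bin/spark-class."""
    return [f"{SPARK_HOME}/bin/spark-class", main_class, *args]


def build_cluster(num_workers=2):
    """Return a Compose-style dict: one master, N workers and one JupyterLab."""
    volume = f"shared-workspace:{SHARED_WORKSPACE}"
    services = {
        MASTER_HOST: {
            "image": "spark-master",  # hypothetical image name
            # Fixed role: this container always runs the standalone master.
            "command": spark_class(
                "org.apache.spark.deploy.master.Master", "--host", MASTER_HOST
            ),
            "ports": ["8080:8080", f"{MASTER_PORT}:{MASTER_PORT}"],
            "volumes": [volume],
        },
        "jupyterlab": {
            "image": "jupyterlab",  # hypothetical image name
            "ports": ["8888:8888"],  # JupyterLab default port
            "volumes": [volume],
            "depends_on": [MASTER_HOST],
        },
    }
    for i in range(1, num_workers + 1):
        services[f"spark-worker-{i}"] = {
            "image": "spark-worker",  # hypothetical image name
            # Fixed role: each worker registers itself with the master URL.
            "command": spark_class(
                "org.apache.spark.deploy.worker.Worker", MASTER_URL
            ),
            # The worker web UI listens on 8081 inside the container;
            # each worker gets its own host port (8081, 8082, ...).
            "ports": [f"{8080 + i}:8081"],
            "volumes": [volume],
            "depends_on": [MASTER_HOST],
        }
    return {"services": services, "volumes": {"shared-workspace": {}}}


if __name__ == "__main__":
    # JSON is also valid YAML, so this output can be saved as a Compose file.
    print(json.dumps(build_cluster(), indent=2))
```

Because JSON is a subset of YAML, the printed output could be saved to a file and passed to Docker Compose with `-f`. The sketch reflects the standalone-mode design choice: every container has a fixed role decided when the cluster is defined, and the workers find the master through its `spark://` URL. With YARN, containers would instead be allocated dynamically.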